In an era where digital interaction defines how we communicate, understanding the different levels of artificial intelligence is crucial. Are all chatbots the same, or do they form a hierarchy? This article explores how traditional chatbots, AI chatbots, AI agents, and agentic AI differ, and why that distinction matters more than ever in the age of automation.

The Traditional Chatbot – Where It All Begins

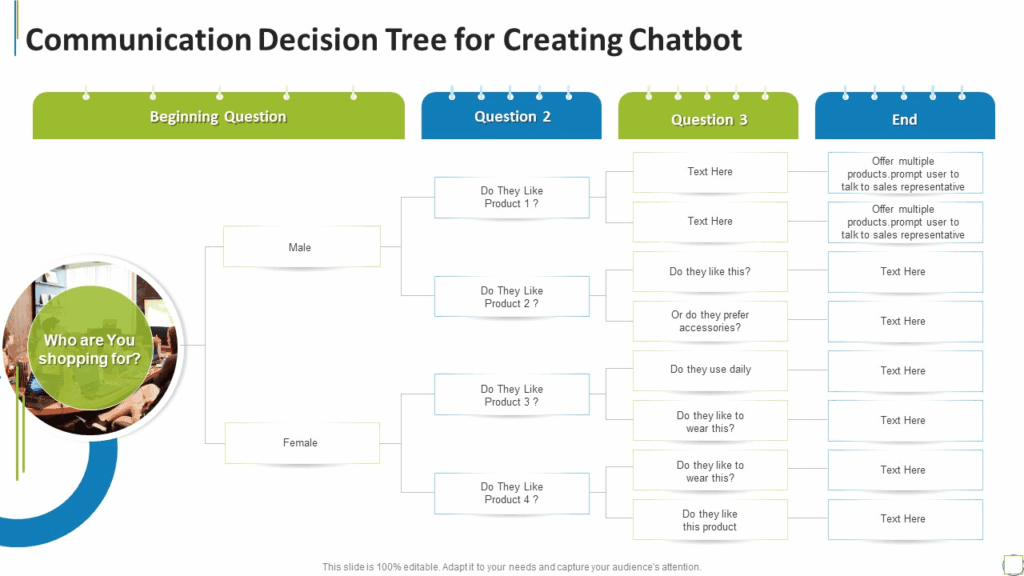

Traditional chatbots operate on a rule-based framework, relying on predefined scripts and conditional logic (known as conversational trees).

Think of them as a flowchart:

- If the user says “Hello,” the bot responds with “Hi there!”

- If the user selects an option, the bot follows a specific, predefined path.

- For instance, in customer service, a chatbot might guide users through multiple steps in selecting a product for a gift.
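To make the flowchart idea concrete, here is a minimal sketch of a conversational tree in Python. The gift-finder flow, the node names, and the `step` function are all made up for illustration; a real no-code platform would hide this structure behind a visual editor.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """One step in the conversation tree: a prompt plus the allowed replies."""
    prompt: str
    # Maps a normalised user reply to the id of the next node.
    options: dict[str, str] = field(default_factory=dict)


# Hypothetical gift-finder flow; every path is written out in advance.
TREE = {
    "start": Node("Hi there! Who is the gift for? (friend/colleague)",
                  {"friend": "budget", "colleague": "budget"}),
    "budget": Node("What is your budget? (low/high)",
                   {"low": "suggest_low", "high": "suggest_high"}),
    "suggest_low": Node("How about a scented candle?"),
    "suggest_high": Node("How about a leather notebook set?"),
}

FALLBACK = "Sorry, I didn't understand that. Please pick one of the options."


def step(node_id: str, user_text: str) -> tuple[str, str]:
    """Return (next_node_id, bot_reply) for one user message."""
    node = TREE[node_id]
    choice = user_text.strip().lower()
    if choice in node.options:
        next_id = node.options[choice]
        return next_id, TREE[next_id].prompt
    # Off-script input: the bot can only repeat the current question.
    return node_id, f"{FALLBACK} {node.prompt}"
```

Calling `step("start", "friend")` moves the conversation to the budget question, while any free-form sentence leaves the user stuck on the same node with the fallback message.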

However, this simplicity is both a strength and a limitation. While rule-based bots excel in structured environments, like handling FAQs, they falter when faced with unexpected queries. Imagine a customer asking, “What’s the best product for my needs?” A traditional bot might respond with irrelevant information or loop back to the main menu, frustrating the user. This rigidity highlights a common misconception: that all chatbots can handle complex interactions. In reality, their effectiveness diminishes outside scripted scenarios, revealing the need for more advanced solutions as user expectations evolve.

Pros:

- Cheap and fast to build: Minimal dev effort using no-code platforms or basic scripting.

- Predictable responses: Great for compliance, legal, and scripted workflows.

- Low risk: No hallucinations or unexpected behaviour.

Cons:

- No flexibility: Breaks easily if users go off-script.

- No learning: Can’t improve or adapt over time.

- Poor UX: Often frustrating, especially for complex or ambiguous queries.

The point here is that a rule-based bot can work well, but everything is pre-configured, so it takes careful design.

AI Chatbots – The Next Level of Interaction

AI chatbots powered by large language models (LLMs) represent a seismic shift in user interaction. Unlike their rule-based predecessors, these advanced systems leverage natural language processing (NLP) to facilitate more fluid and open-ended conversations. Imagine a customer service scenario where a user asks about a product’s compatibility with other devices. An LLM-powered chatbot can understand the nuances of the question, engage in follow-up queries, and provide tailored responses, creating a dialogue that feels less robotic and more human.

However, this leap in conversational capability comes with significant challenges. One major limitation is the lack of memory and persistent context. While LLMs can generate contextually relevant responses within a single session, the model itself retains nothing between sessions unless the application stores that information and feeds it back in.

For instance, if a user discusses their preferences today but returns tomorrow for further inquiries, the chatbot won’t remember past interactions. This can lead to frustration as users must reintroduce themselves and their needs repeatedly.

Consider the application of AI chatbots in healthcare. A patient might engage with a chatbot to schedule an appointment and discuss symptoms. The chatbot can provide immediate assistance but won’t recall the patient’s previous concerns during future interactions unless explicitly programmed to do so. This lack of continuity can hinder the overall user experience.
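What "explicitly programmed to do so" might look like can be sketched in a few lines. This is only an illustration under assumptions: `stateless_model` is a stand-in for a real LLM call, and `MemoryStore`, `chat`, and the JSON file layout are hypothetical names, not part of any vendor's API.

```python
import json
import tempfile
from pathlib import Path


def stateless_model(messages: list[dict]) -> str:
    """Stand-in for an LLM call: it only 'knows' what is in `messages`."""
    seen = [m for m in messages if m["role"] == "user"]
    return f"I can see {len(seen)} user message(s) in my context."


class MemoryStore:
    """Keeps each user's message history in a JSON file between sessions."""

    def __init__(self, path: Path):
        self.path = path

    def load(self, user_id: str) -> list[dict]:
        if not self.path.exists():
            return []
        return json.loads(self.path.read_text()).get(user_id, [])

    def save(self, user_id: str, history: list[dict]) -> None:
        data = json.loads(self.path.read_text()) if self.path.exists() else {}
        data[user_id] = history
        self.path.write_text(json.dumps(data))


def chat(store: MemoryStore, user_id: str, text: str) -> str:
    """One turn: reload history, call the model, persist the new turn."""
    history = store.load(user_id)
    history.append({"role": "user", "content": text})
    reply = stateless_model(history)
    history.append({"role": "assistant", "content": reply})
    store.save(user_id, history)
    return reply


# Two separate "sessions" that share the same file on disk.
with tempfile.TemporaryDirectory() as tmp:
    path = Path(tmp) / "memory.json"
    first = chat(MemoryStore(path), "patient-1", "I have had a cough since Monday.")
    second = chat(MemoryStore(path), "patient-1", "Can I book an appointment?")
```

The second call sees both user messages even though it uses a fresh `MemoryStore` object, because the continuity lives in the stored history rather than in the model.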

Moreover, while LLMs excel at generating human-like text, they can sometimes produce unexpected or irrelevant answers due to misinterpretation of context or ambiguity in user queries. This unpredictability challenges businesses to implement robust training protocols and continuous monitoring to ensure quality interactions.

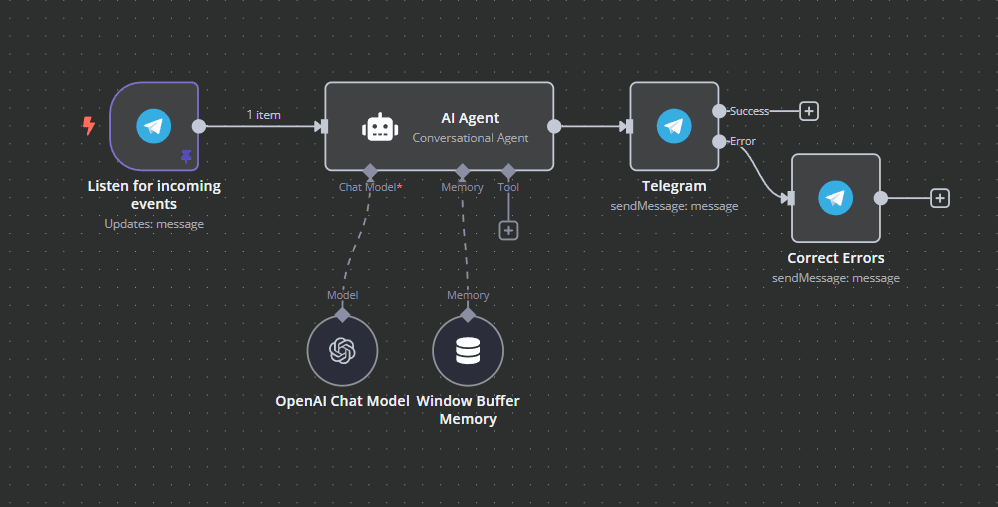

This is my Telegram AI agent that I built in n8n using OpenAI’s API. In this case, I use local memory so that it produces more useful outputs.

Pros:

- Conversational fluency: Can handle open-ended queries and sound human.

- Versatile: Can summarise, translate, rephrase without custom training.

- Quick to deploy: APIs like OpenAI’s or Anthropic’s make this almost plug-and-play.

Cons:

- No memory or continuity: Can’t track progress or learn user preferences unless explicitly engineered.

- Hallucinations: Confidently makes up facts without source-grounding.

- Limited actionability: Can’t take actions on the user’s behalf; it only talks to the user.

In summary, AI chatbots powered by LLMs elevate user experience through enhanced conversational capabilities but grapple with memory limitations that can disrupt continuity and personalisation. As organisations adopt these technologies, they must navigate these challenges to maximise their potential while delivering seamless interactions.

AI Agents – The Autonomous Assistants

AI agents are not just reactive tools; they are becoming autonomous assistants that anticipate user needs and take initiative. Unlike traditional chatbots, which respond to specific queries, these agents can autonomously manage tasks, streamline workflows, and learn from interactions. Assistants such as Microsoft’s Cortana or Google Assistant gave an early taste of this: they can schedule meetings, send reminders, and adjust smart home settings, although usually in response to a command or a preconfigured routine.

Imagine an AI agent that monitors your email for important messages. It could prioritise tasks based on deadlines and context, automatically drafting responses or flagging items for follow-up. This proactive capability is a game-changer for busy professionals who juggle multiple responsibilities.
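The prioritisation step could be sketched roughly as follows. I am assuming the deadline has already been extracted from each message (for example by an LLM), and the `Email` class, the `triage` function, and the two-day urgency threshold are illustrative choices rather than a recommendation.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Email:
    subject: str
    deadline: Optional[date] = None  # assumed to be extracted upstream


def triage(emails: list[Email], today: date, urgent_days: int = 2) -> list[tuple[str, str]]:
    """Order emails by deadline (soonest first) and label each one."""
    # Emails without a deadline sort to the end of the list.
    ordered = sorted(emails, key=lambda e: (e.deadline is None, e.deadline or date.max))
    labelled = []
    for e in ordered:
        if e.deadline is None:
            label = "no-deadline"
        elif (e.deadline - today).days <= urgent_days:
            label = "urgent"
        else:
            label = "follow-up"
        labelled.append((e.subject, label))
    return labelled


inbox = [
    Email("Newsletter"),
    Email("Contract review", date(2025, 3, 14)),
    Email("Invoice approval", date(2025, 3, 11)),
]
plan = triage(inbox, today=date(2025, 3, 10))
```

Here `plan` puts the invoice first and marks it urgent, keeps the contract as a follow-up, and leaves the newsletter at the bottom.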

However, AI agents’ autonomy introduces orchestration challenges. They need to coordinate seamlessly across various platforms and applications. For example, an agent managing your calendar must integrate with email services, task management tools like Asana or Trello, and communication platforms like Slack. If these integrations falter or if the agent misinterprets data, it can lead to scheduling conflicts or missed deadlines.

Moreover, overconfidence is a significant concern. AI agents may take actions based on incomplete information or misjudgments about user preferences. Picture an agent that books a flight without confirming your availability. This can lead to frustration rather than efficiency.
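One common guard against this kind of overconfidence is a confirmation gate in front of risky actions. The sketch below is a minimal version under assumptions: `Action`, `run_action`, and the two tools are hypothetical, and a real agent would call actual calendar or travel APIs instead of returning strings.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Action:
    name: str
    args: dict
    # Irreversible or costly actions need a human "yes" first.
    needs_confirmation: bool = False


def run_action(action: Action,
               tools: dict[str, Callable[..., str]],
               confirm: Callable[[Action], bool]) -> str:
    """Execute `action`, asking `confirm` first when it is flagged as risky."""
    if action.name not in tools:
        return f"skipped: unknown tool {action.name!r}"
    if action.needs_confirmation and not confirm(action):
        return f"cancelled: {action.name} was not approved"
    return tools[action.name](**action.args)


# Hypothetical tools used only for this illustration.
def check_calendar(date: str) -> str:
    return f"calendar checked for {date}"


def book_flight(destination: str, date: str) -> str:
    return f"flight to {destination} booked for {date}"


TOOLS = {"check_calendar": check_calendar, "book_flight": book_flight}
```

Read-only actions such as `check_calendar` run straight away, while `book_flight` only runs when the `confirm` callback (a chat prompt, a button, an approval queue) returns `True`.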

To mitigate these issues, developers must implement robust feedback loops and memory utilisation strategies. An effective AI agent should remember past interactions to refine its understanding of user preferences while allowing for corrections when it missteps. This balance between autonomy and oversight is crucial for ensuring that AI agents enhance productivity rather than hinder it.

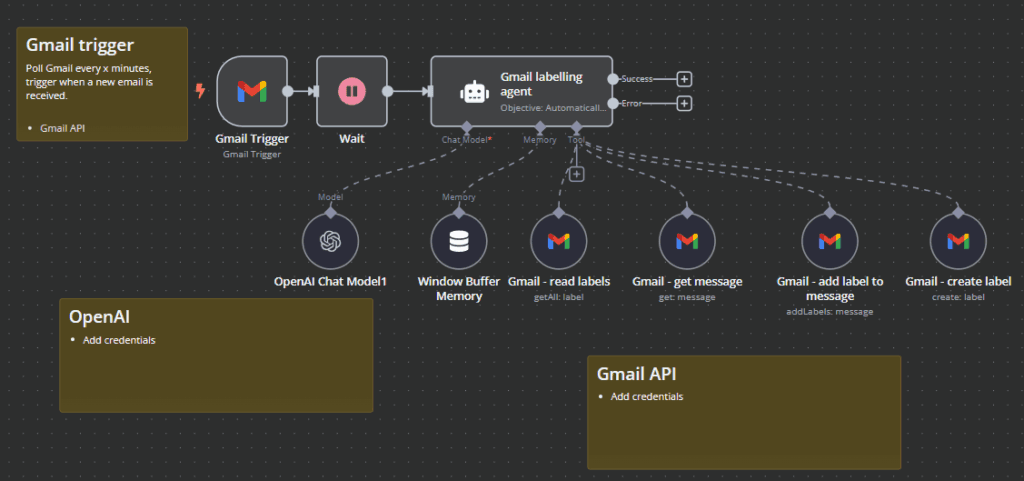

Here is a Gmail AI agent that I use on my private email account. In this case, I use it to tag my emails.

Pros:

- Can act, not just talk: Executes tasks like sending emails, retrieving data, or triggering automations.

- Stateful: Maintains context and memory across sessions or workflows.

- Highly customisable: Developers can chain actions, tools, and logic around a core LLM.

Cons:

- Harder to build and debug: Tool orchestration introduces fragility.

- Still unpredictable: LLMs can take the wrong action or misinterpret instructions.

- Slower latency: Multiple steps and API calls can increase response time.

In summary, while AI agents offer remarkable capabilities in task automation and proactive assistance, their orchestration challenges and potential overconfidence require careful management to truly serve users effectively.

Agentic AI – The Future of Intelligent Action

Agentic AI represents a paradigm shift in intelligent systems. Machines not only execute tasks but also learn from their experiences, adapting in real time. The core of this capability lies in feedback loops and recursive improvement. These mechanisms allow AI to assess its performance, identify errors, and refine its strategies continuously.

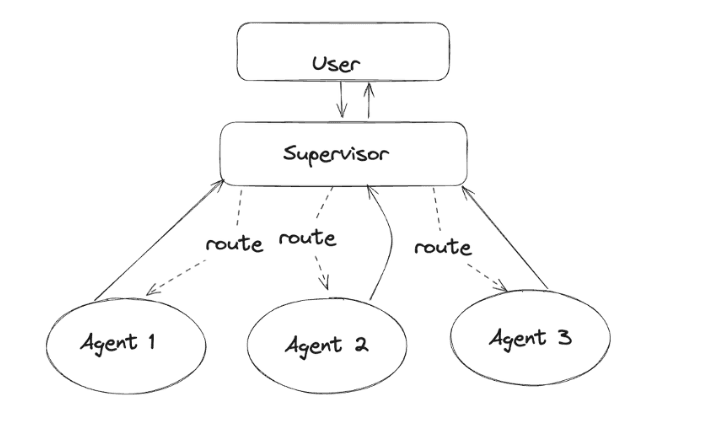

The concept that resonates most with me is the multi-agent pattern. Here, the user interacts with a supervisor agent, which in turn delegates to sub-agents, each prompted with a specific role and goal. As you have seen, the more specific your prompts are, the better the outcomes, so giving specific agents specific roles and tasks produces much higher-quality output.

- The supervisor agent is goal-driven, not just rule-driven.

- The sub-agents operate with some autonomy, tool use, and memory.

- The system as a whole can:

    - Plan, prioritise, and adapt.

    - Delegate tasks based on context (e.g. choosing the right agent based on skill or current load).

    - Course-correct when things go wrong (e.g. retry strategies, reassignment).

- The agents interact asynchronously or dynamically, not in a static pipeline.

This aligns with how some people define “agentic AI”: autonomous systems that pursue goals, make decisions, and organise sub-tasks without every step being hardcoded.
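The supervisor pattern described above can be sketched without any LLM at all, which helps separate the orchestration logic from the model calls. Everything here is an assumption for illustration: the `SubAgent` and `Supervisor` classes, the skill labels, and the handlers, which in a real system would each wrap a role-specific prompt and tools.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class SubAgent:
    name: str
    skills: set[str]
    handler: Callable[[str], str]  # does the work; may raise on failure
    load: int = 0                  # number of tasks completed so far


class Supervisor:
    """Routes each task to the least-loaded capable agent, reassigning on failure."""

    def __init__(self, agents: list[SubAgent]):
        self.agents = agents

    def delegate(self, skill: str, task: str) -> str:
        candidates = sorted(
            (a for a in self.agents if skill in a.skills),
            key=lambda a: a.load,
        )
        for agent in candidates:
            try:
                result = agent.handler(task)
            except Exception:
                continue  # course-correct: try the next capable agent
            agent.load += 1
            return f"{agent.name}: {result}"
        return f"escalated to a human: no agent could complete {task!r}"


# Hypothetical sub-agents; the first researcher always fails.
def flaky_researcher(task: str) -> str:
    raise RuntimeError("search API timed out")


def backup_researcher(task: str) -> str:
    return f"notes on {task}"


def writer(task: str) -> str:
    return f"draft about {task}"


team = Supervisor([
    SubAgent("researcher-a", {"research"}, flaky_researcher),
    SubAgent("researcher-b", {"research"}, backup_researcher),
    SubAgent("writer", {"write"}, writer),
])
```

With this setup, `team.delegate("research", "MCP")` falls through from the failing agent to the backup one, and a skill nobody offers ends in an escalation instead of a crash.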

Pros:

- End-to-end autonomy: Can handle complex, multi-step tasks with minimal supervision.

- Planning and reasoning: Breaks down goals, creates sub-tasks, reprioritises.

- Future-proof concept: Represents the next frontier of intelligent software systems.

Cons:

- Very experimental: Prone to failure, looping, or unsafe behaviour.

- Hard to control: Unpredictable decisions; unclear boundaries between autonomy and compliance.

- Ethical and trust issues: Can make decisions with real-world consequences without sufficient transparency.

As we push toward realising agentic AI’s full potential, imagine an intelligent front-desk agent, not just scheduling meetings, but anticipating needs based on past interactions, coordinating across departments, adjusting calendars based on shifting priorities, and even recognising when a visitor’s request falls outside the norm and escalating it appropriately. It’s adaptive orchestration. The possibilities are vast, but they demand careful navigation through the complexities of alignment and reliability to ensure these intelligent agents serve humans effectively and ethically.

Example: AI-Powered Receptionist Using MCP

Imagine an AI receptionist agent responsible for managing a busy front desk at a consulting firm. On its own, the receptionist can greet visitors, schedule meetings, and notify employees. But things get interesting when it’s plugged into a multi-agent system powered by MCP.

The Model Context Protocol (MCP) is a key enabler for scaling simple agents into truly agentic systems. MCP is an open standard, introduced by Anthropic, that defines how LLM applications discover and call external tools and data sources. An MCP server advertises the capabilities it offers (tools, resources, and prompts), and any MCP client can list and invoke them through the same JSON-RPC interface. This turns fragmented toolchains into a cohesive, goal-oriented ecosystem: instead of relying on brittle hardcoded chains, a supervisor agent can discover at runtime what each specialised agent or service can do, pass along the relevant context, and coordinate actions. Delegation is then driven by the capabilities that are actually available rather than only by predefined flows, which pushes the system toward more generalisable, adaptive behaviour.

Without MCP:

- The receptionist has a hardcoded rule: if someone asks for Jane, check her calendar and send a Slack message.

- If Jane is unavailable or there’s a last-minute client reschedule, the system can’t adapt.

- Alternatively, the developer has to build a custom integration for every tool.

With MCP:

- The Receptionist Agent (RA) has a clear remit: “I handle scheduling, visitor check-ins, and employee notifications.” The other agents expose their capabilities as tools that RA can discover.

- When a VIP client arrives, RA takes the current context (Jane is double-booked, the visitor has high priority) and calls those tools:

    - It queries the Calendar Agent (CA) for Jane’s availability and recent changes.

    - It asks the Scheduling Agent (SA) to reshuffle internal meetings.

    - It pings the Notification Agent (NA) to alert Jane and her assistant with updated context.

    - If necessary, it escalates to the Human Override Agent with a packaged summary of the conflict.

Because every agent is reached through a common protocol, the system can reason about tasks, work out solutions, and adapt to real-world messiness, without brittle one-off logic.

Understanding the distinctions between various technologies is not just academic; it’s a critical skill for navigating our increasingly digital lives. For instance, recognising the difference between machine learning and traditional programming can reshape how we approach problem-solving. Machine learning adapts and evolves, while traditional programming follows rigid rules. This awareness empowers you to leverage technology effectively, ensuring you harness its full potential rather than being overwhelmed by it.

Consider how this knowledge influences your daily interactions with AI-driven tools. Are you merely using them, or are you engaging with them strategically? As technology continues to permeate every aspect of our lives, your expectations must evolve, too. Demand transparency, adaptability, and ethical considerations from the technologies you use.

In a world where technology shapes our decisions and experiences, understanding these nuances is not just beneficial; it’s essential for fostering a future where technology serves humanity rather than dictates it.

Conclusions

The spectrum of digital interfaces ranges from simple rule-based chatbots to sophisticated agentic AI. Recognising these distinctions is not just academic; it informs how we engage with technology today and shapes the innovations of tomorrow. The future is here.

Are you ready to embrace it?